
| 2004 - 2010 | 2010 - 2013 | June-Aug 2015 | June-Aug 2016 | June-Aug 2017 | Aug 2017 - May 2018 | 2013 - 2018 | 2018 - now |

I am a senior research scientist at the NVIDIA Seattle Robotics Lab. I am interested in using computer vision and 3D vision for robotics tasks such as object manipulation. At the moment, I am mainly working on model-free object manipulation in unconstrained environments. I am also interested in 6D object pose estimation, instance segmentation, and recognition from RGB-D images. Before joining NVIDIA, I completed my PhD in the Computer Science Department at George Mason University, where I worked under the supervision of Prof. Jana Kosecka. During my PhD, I worked on a variety of computer vision problems for robot perception, such as vision-based navigation, object pose estimation, object detection, semantic segmentation, and image-based localization. I was fortunate to work with amazing collaborators in several industry research groups through internships at Google Brain Robotics, Zoox, and Google StreetView. Prior to my PhD, I received my master's degree in AI and Robotics from the University of Tehran.

News:

- March 2022: 1 paper on zero-shot object rearrangement accepted to CVPR 2022.

- September 2021: 3 papers accepted to CoRL 2021.

- July 2021: Got promoted to Senior Robotics Research Scientist.

- June 2021: Our paper on Reactive Human-to-Robot Handovers of Arbitrary Objects won the Best Paper Award on Human-Robot Interaction at ICRA 2021.

- June 2021: 1 paper accepted to IROS 2021.

- May 2021: 1 paper accepted to RSS 2021.

- March 2021: 1 paper accepted to CVPR 2021.

- March 2021: 5 papers accepted to ICRA 2021.

- September 2020: Our paper on instance segmentation of unknown objects is accepted to CoRL 2020.

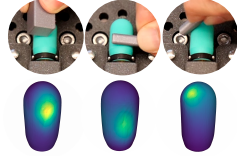

- May 2020: Our paper on interpreting signals from touch sensors is accepted to RSS 2020.

- May 2020: Our paper on grasping unknown objects in clutter is nominated for the Best Robot Manipulation Paper and Best Student Paper awards.

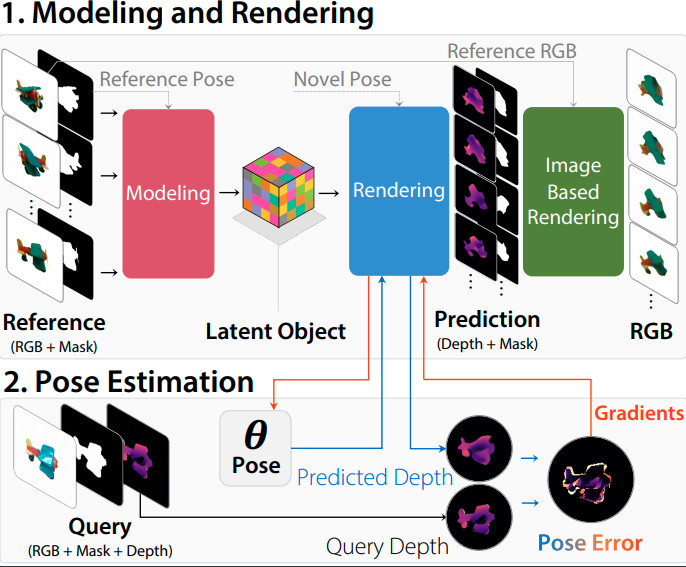

- February 2020: Our paper on zero-shot pose estimation is accepted to CVPR 2020.

- January 2020: 2 papers accepted to ICRA 2020.

- October 2019: Got promoted to Senior Robotics Research Scientist.

- September 2019: Our paper on evaluating different grasp sampling schemes is accepted to ISRR 2019.

- September 2019: Our paper on instance segmentation of unknown objects got accepted to CoRL 2019.

- July 2019: Our paper on 6-DOF grasping of unknown objects got accepted to ICCV 2019.

- May 2019: Our paper got accepted to RSS 2019.

- January 2019: Our paper got accepted to ICRA 2019.

- August 2018: Joined the NVIDIA Robotics Lab as a Research Scientist.

- June 2018: I defended my dissertation and graduated from the PhD program.

- May 2018: Received the Outstanding Graduate Student Award from the CS Department.

- I will be joining the Google Brain team as a visiting researcher for the 2017 academic year.

- April 2017: Our paper got accepted to RSS 2017 conference.

- February 2017: Our paper is covered by IEEE Spectrum and Forbes.

- February 2017: Our paper got accepted to CVPR 2017 conference.

- September 2016: Two papers got accepted to the 3DV 2016 conference.

- March 2016: Won second place in the Computer Science PhD Symposium at George Mason University.

Publications:

- ProgPrompt: Generating Situated Robot Task Plans using Large Language Models

- MegaPose: 6D Pose Estimation of Novel Objects via Render & Compare

- Learning Robust Real-World Dexterous Grasping Policies via Implicit Shape Augmentation

|

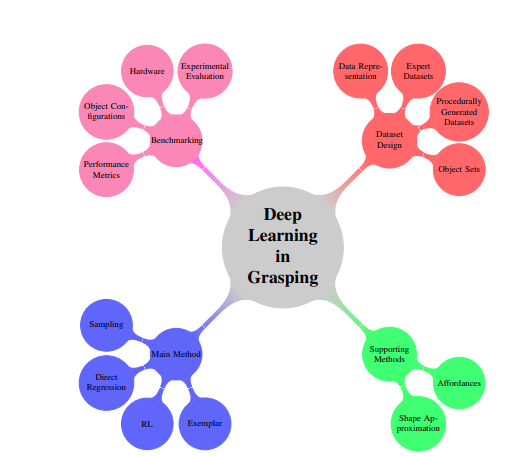

Deep Learning Approaches to Grasp Synthesis: A Review

|

||

- IFOR: Iterative Flow Minimization for Robotic Object Rearrangement

- RICE: Refining Instance Masks in Cluttered Environments with Graph Neural Networks

- STORM: An Integrated Framework for Fast Joint-Space Model-Predictive Control for Reactive Manipulation

- Goal-Auxiliary Actor-Critic for 6D Robotic Grasping with Point Clouds

- NeRP: Neural Rearrangement Planning for Unknown Objects

- Reactive Long Horizon Task Execution via Visual Skill and Precondition Models

|

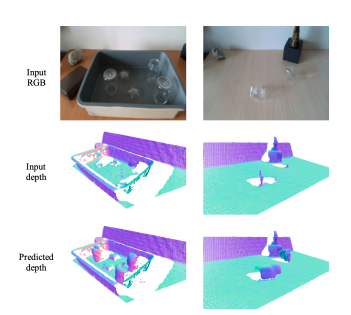

RGB-D Local Implicit Function for Depth Completion of Transparent

Objects

|

||

- Contact-GraspNet: Efficient 6-DoF Grasp Generation in Cluttered Scenes

- Object Rearrangement Using Learned Implicit Collision Functions

- Reactive Human-to-Robot Handovers of Arbitrary Objects (Best Human-Robot Interaction Paper)

- ACRONYM: A Large-Scale Grasp Dataset Based on Simulation

|

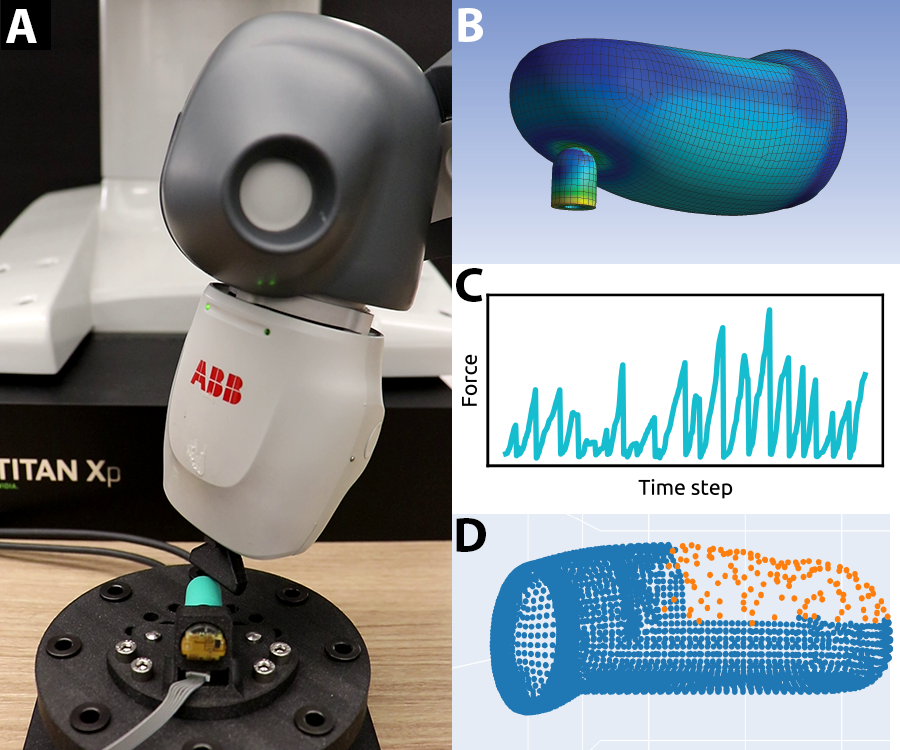

Sim-to-Real for Robotic Tactile Sensing via

Physics-Based

Simulation and Learned Latent Projections

|

||

- Learning RGB-D Feature Embeddings for Unseen Object Instance Segmentation

- Interpreting and Predicting Tactile Signals via a Physics-Based and Data-Driven Framework

- LatentFusion: End-to-End Differentiable Reconstruction and Rendering for Unseen Object Pose Estimation

- 6-DOF Grasping for Target-driven Object Manipulation in Clutter (Best Robot Manipulation Paper Finalist)

|

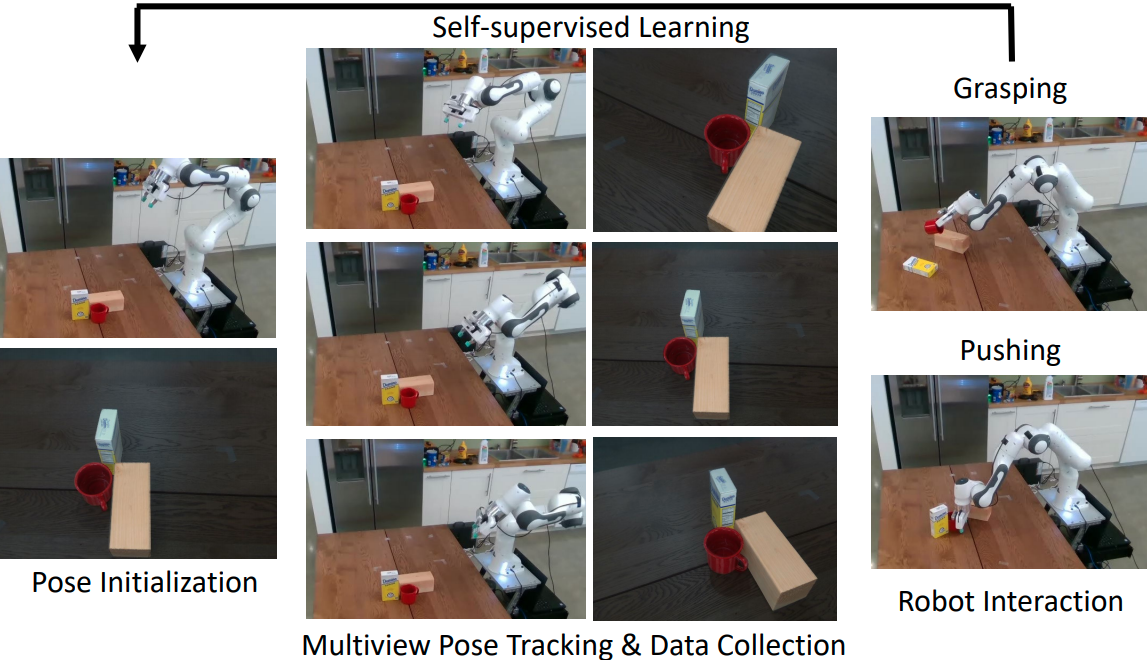

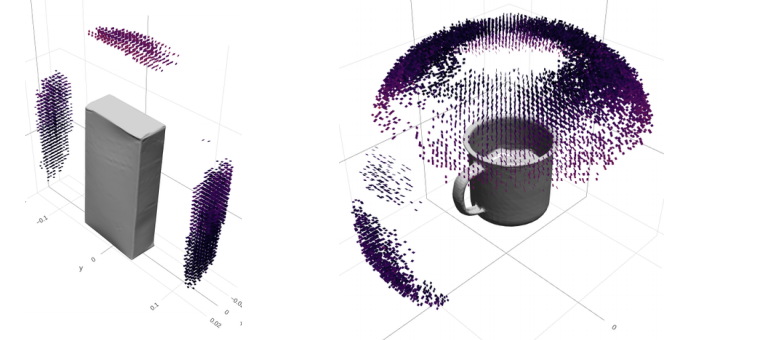

Self-supervised 6D Object

Pose

Estimation

for

Robot Manipulation

|

||

- A Billion Ways to Grasp: An Evaluation of Grasp Sampling Schemes on a Dense, Physics-based Grasp Data Set

- The Best of Both Modes: Separately Leveraging RGB and Depth for Unseen Object Instance Segmentation

- 6-DOF GraspNet: Variational Grasp Generation for Object Manipulation

|

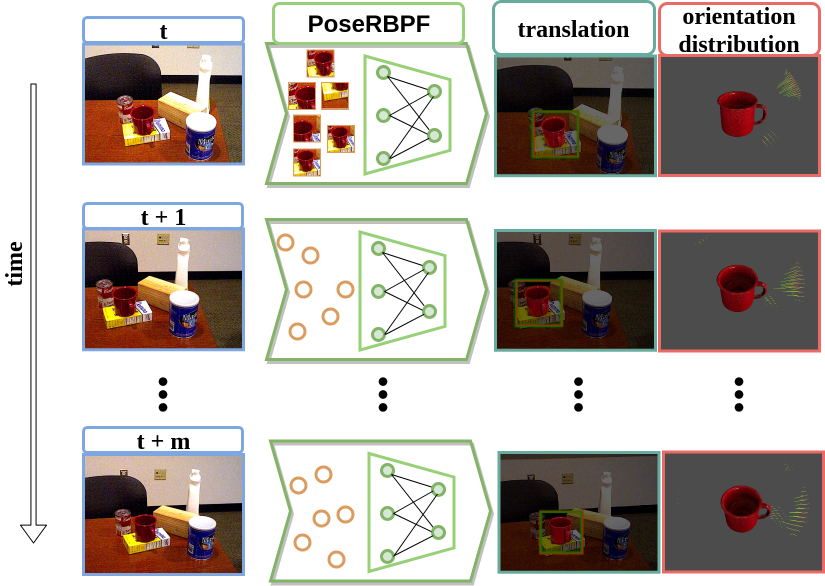

PoseRBPF:

A

Rao-Blackwellized

Particle

Filter for

6D Object

Pose

Tracking |

||

- Visual Representations for Semantic Target Driven Navigation

- Synthesizing Training Data for Object Detection in Indoor Scenes

|

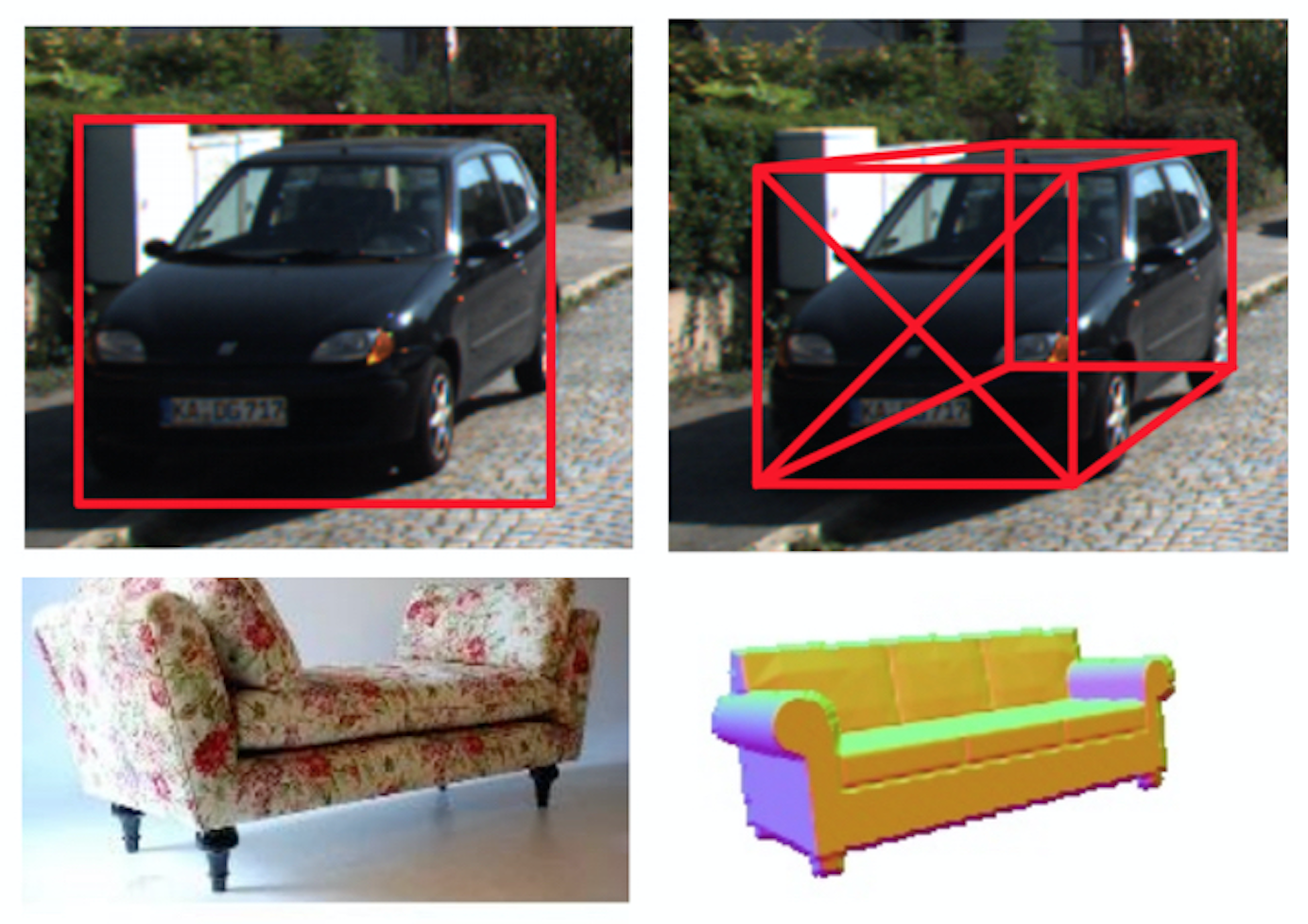

3D

Bounding

Box

Estimation

Using

Deep

Learning

and

Geometry |

||

|

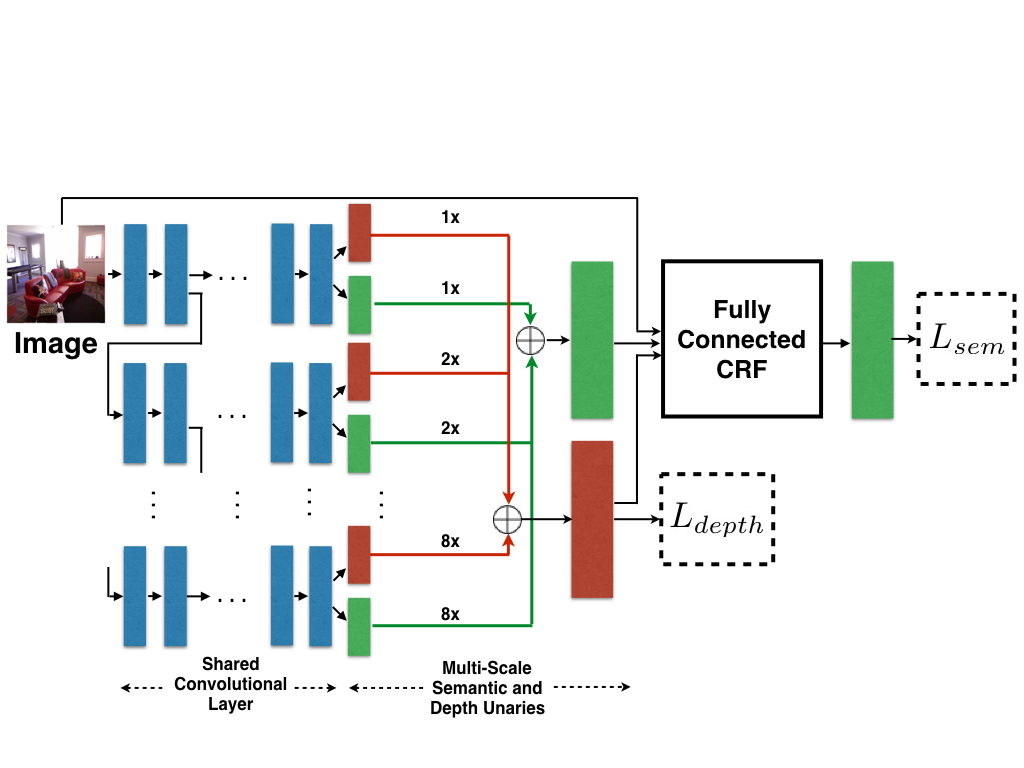

Joint

Semantic

Segmentation

and

Depth

Estimation

with

Deep

Convolutional

Networks |

||

|

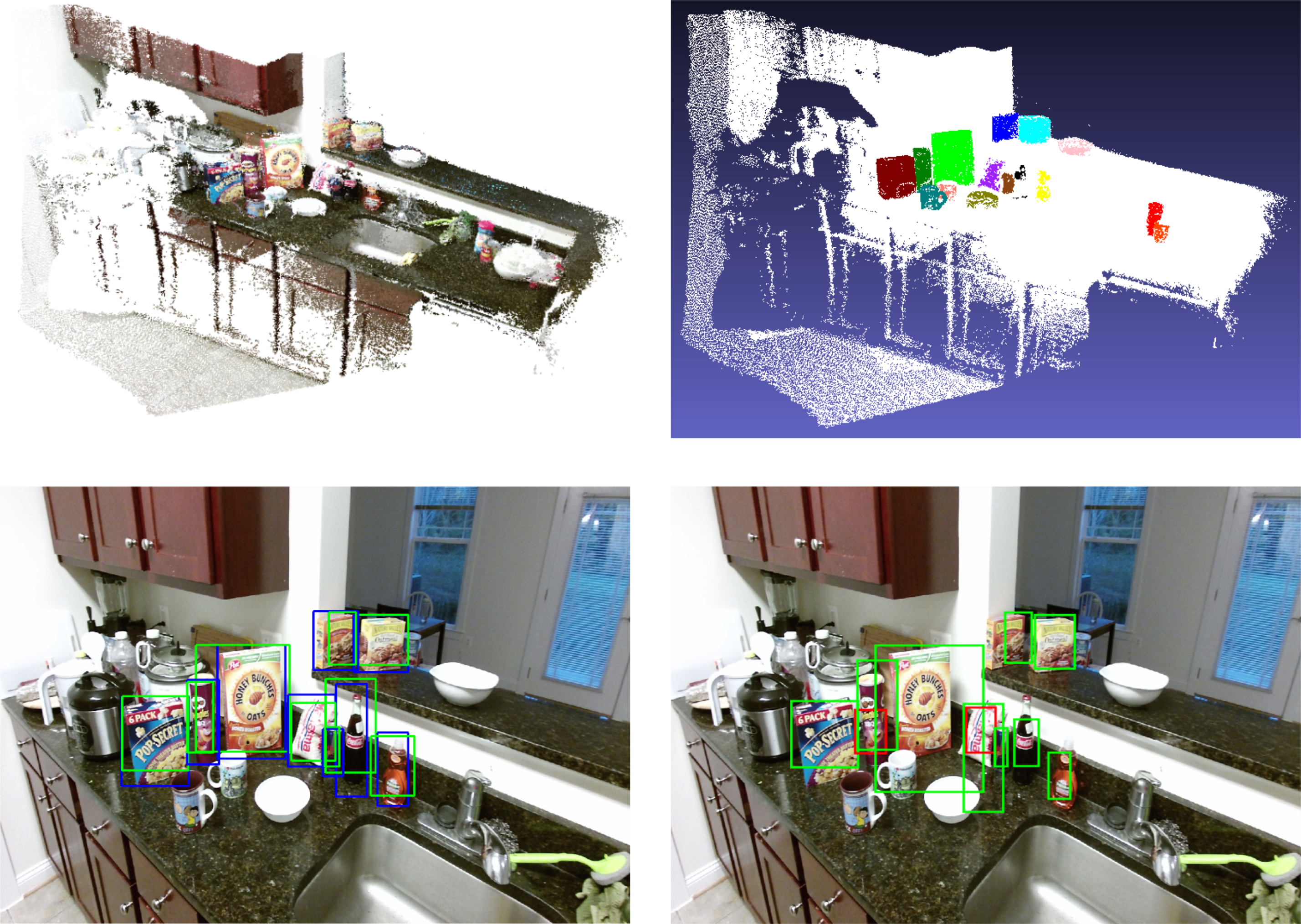

Multiview

RGB-D

Dataset

for

Object

Instance

Detection | ||

|

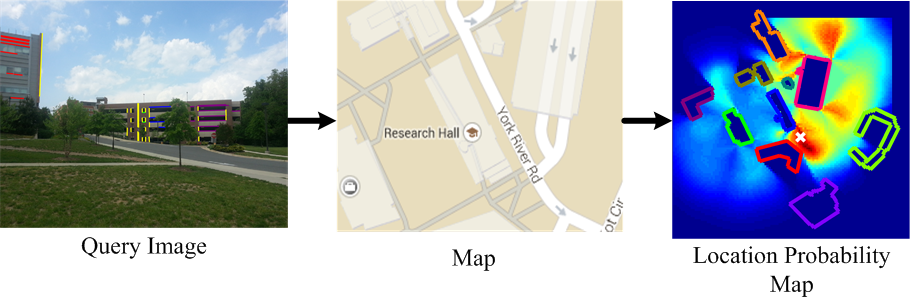

Semantic

Image

Based

Geolocation

Given

a

Map |

||

|

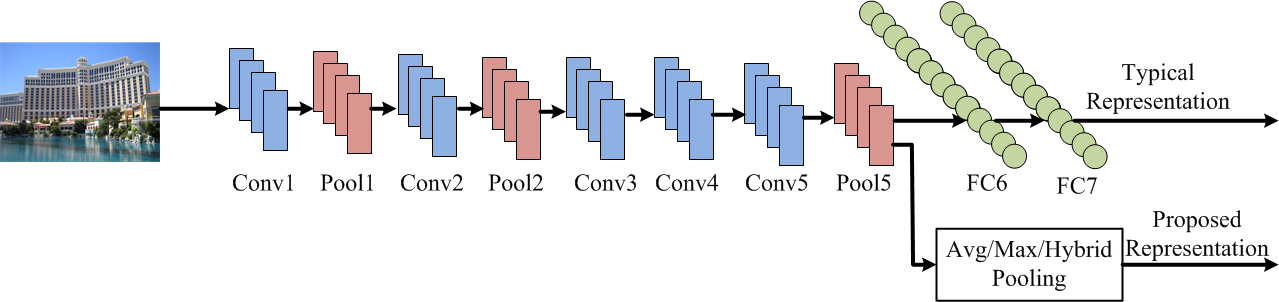

Deep

Convolutional

Features

for

Image

Based

Retrieval

and

Scene

Categorization |

||

|

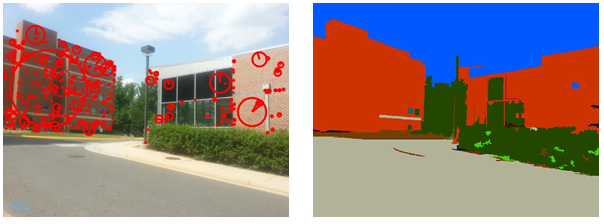

Semantically

Guided

Location

Recognition

for

Outdoors

Scenes |

||

- Semantically Aware Bag-of-words for Localization