Predicting Performance for Natural Language Processing Tasks

This is a post about our paper, which will be presented at ACL 2020. tl;dr: You can use previously published results to estimate the performance of a new experiment before running it!

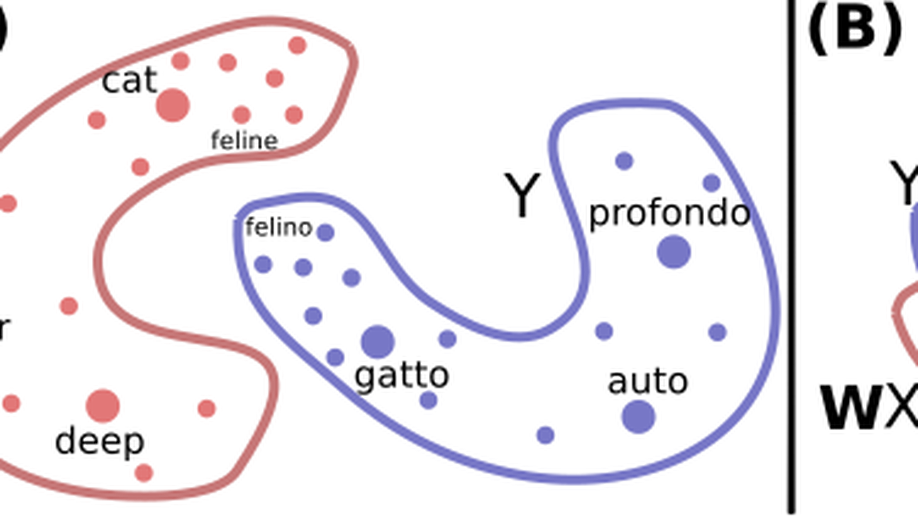

Should All Cross-Lingual Embeddings Speak English?

This is a post about our paper, which was accepted at ACL 2020. Word embeddings are ubiquitous in modern NLP, from static ones (like word2vec or fastText) to contextual representations obtained from ELMo, BERT, and other models.

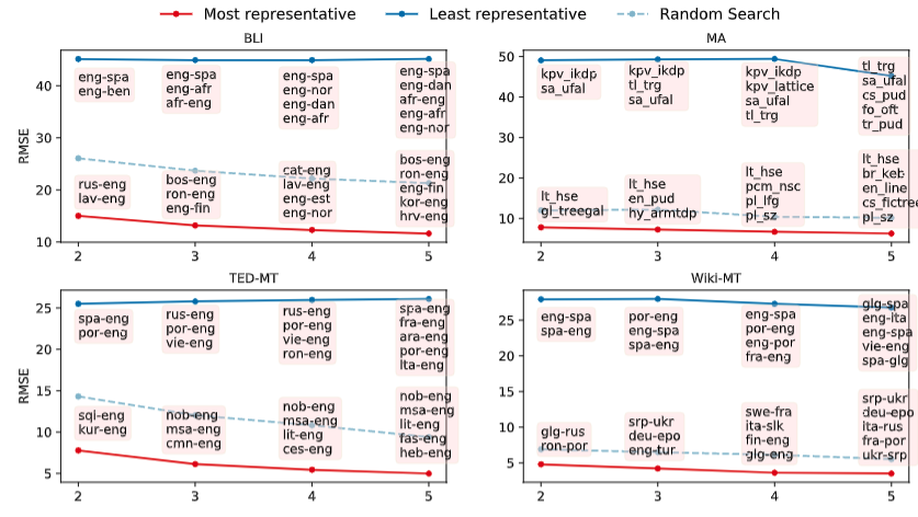

A note on evaluating multilingual benchmarks

Antonis Anastasopoulos, December 2019. tl;dr: Be careful when reporting averages for multilingual benchmarks, especially when making claims about multilinguality. Averaging by language family can also provide further insights.
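
As a rough illustration of why the choice of average matters, here is a minimal Python sketch that compares a plain per-language average with an average taken over language families. The scores, language codes, and the `average_by_family` helper are all hypothetical, made up for this example, and are not taken from the post or from any benchmark.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical per-language scores and family labels, for illustration only.
scores = {"de": 80.0, "nl": 78.0, "sv": 82.0, "fi": 60.0, "tr": 55.0}
family = {
    "de": "Germanic",
    "nl": "Germanic",
    "sv": "Germanic",
    "fi": "Uralic",
    "tr": "Turkic",
}


def average_by_family(scores, family):
    """Group per-language scores by family and average within each group."""
    grouped = defaultdict(list)
    for lang, score in scores.items():
        grouped[family[lang]].append(score)
    return {fam: mean(vals) for fam, vals in grouped.items()}


per_family = average_by_family(scores, family)

# Plain average: every language counts equally, so the three Germanic
# languages dominate the result.
language_average = mean(list(scores.values()))

# Family-level average: every family counts equally.
family_average = mean(list(per_family.values()))

print(per_family)
print(f"average over languages: {language_average:.1f}")
print(f"average over families:  {family_average:.1f}")
```

With these made-up numbers, the average over languages is higher than the average over families, because the well-represented family also happens to have the highest scores. Which of the two is more appropriate depends on the claim being made.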