- When: Tuesday, October 19, 2021 from 11:00 AM to 12:00 PM

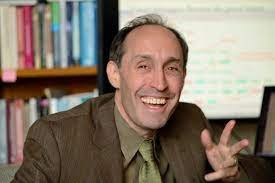

- Speakers: Jason Eisner, Johns Hopkins University

- Location: Research Hall 163

- Directions: Following University guidelines, if you are attending the on-campus Distinguished Lecture Series talk, you must RSVP through this link: https://docs.google.com/forms/d/e/1FAIpQLSc8hLGh9z--8_CO0VsVPWtMTdmOKLRu472BmEugJ05k2OPJmg/viewform?usp=sf_link Participants must complete Mason COVID Health✓™ and receive a “green light” status on the day of the event. Masks are also required for attendance.

ABSTRACT

Suppose you are monitoring discrete events in real time. These might be users' interactions with a social media site, or patients' interactions with the medical system, or musical notes. Can you predict what events will happen in the future, and when? Can you fill in past events that you may have missed?

A probability model that supports such reasoning is the neural Hawkes process (NHP), in which the Poisson intensities of K event types at time t depend on the history of past events. This autoregressive architecture can capture complex dependencies. It resembles an LSTM language model over K word types, but allows the LSTM state to evolve in continuous time. I'll present the NHP model along with methods for estimating parameters (MLE and NCE), sampling predictions of the future (thinning), and imputing missing events (particle smoothing).
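As a rough illustration of sampling by thinning, the sketch below draws future event times from a classical single-type Hawkes process with an exponential kernel. This is a simplified stand-in, not the neural model from the talk: the `intensity` function, its parameters `mu`, `alpha`, and `beta`, and the function name `sample_by_thinning` are all assumptions made for the example, and the NHP would replace the hand-written intensity with one computed from a continuous-time LSTM state over K event types.

```python
import math
import random


def intensity(t, history, mu=0.5, alpha=0.8, beta=1.0):
    """Exponential-kernel Hawkes intensity at time t (hypothetical stand-in
    for a neural intensity): a base rate plus a decaying bump per past event."""
    return mu + sum(alpha * math.exp(-beta * (t - s)) for s in history if s <= t)


def sample_by_thinning(horizon, mu=0.5, alpha=0.8, beta=1.0, seed=0):
    """Sample event times on [0, horizon) with a thinning (rejection) scheme."""
    rng = random.Random(seed)
    events = []
    t = 0.0
    while True:
        # Between events this intensity only decays, so its current value
        # is a valid upper bound until the next accepted event.
        lam_bar = intensity(t, events, mu, alpha, beta)
        # Propose the next candidate time from a Poisson process of rate lam_bar.
        t += rng.expovariate(lam_bar)
        if t >= horizon:
            break
        # Accept the candidate with probability intensity(t) / lam_bar.
        if rng.random() * lam_bar <= intensity(t, events, mu, alpha, beta):
            events.append(t)
    return events


if __name__ == "__main__":
    print(sample_by_thinning(horizon=20.0, seed=1))
```

The upper bound here relies on the intensity decaying monotonically between events, which holds for this kernel but would need a different bound for an intensity that can rise between events.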

I'll then show how to combine the NHP with a temporal deductive database for a real-world domain, which tracks how possible event types and other facts change over time. Neural Datalog Through Time (NDTT) derives a deep recurrent neural architecture from the temporal Datalog program that specifies the database. This allows us to scale the method to large K by incorporating expert knowledge. We have also constructed Transformer versions of the NHP and NDTT architectures.

This work was done with Hongyuan Mei and other collaborators including Guanghui Qin, Minjie Xu, and Tom Wan.

BIO

Jason Eisner is Professor of Computer Science at Johns Hopkins University, as well as Director of Research at Microsoft Semantic Machines. He is a Fellow of the Association for Computational Linguistics. At Johns Hopkins, he is also affiliated with the Center for Language and Speech Processing, the Mathematical Institute for Data Science, and the Cognitive Science Department. His goal is to develop the probabilistic modeling, inference, and learning techniques needed for a unified model of all kinds of linguistic structure. His 150+ papers have presented various algorithms for parsing, machine translation, and weighted finite-state machines; formalizations, algorithms, theorems, and empirical results in computational phonology; and unsupervised or semi-supervised learning methods for syntax, morphology, and word-sense disambiguation. He is also the lead designer of Dyna, a new declarative programming language that provides an infrastructure for AI research. He has received two school-wide awards for excellence in teaching, as well as recent Best Paper Awards at ACL 2017, EMNLP 2019, and NAACL 2021.
